Working with scientific literature - Impact, quality and finding

Motivation

In the previous chapter on working with scientific literature we focused on summarizing scientific literature and using outlines and other techniques as well as citing scientific literature correctly in our scientific writings.

In this chapter we focus on the question of how to identify the scientific literature that is relevant for our objectives.

The motivation for understanding how to find relevant literature arises from the fact that anyone engaging with primary scientific research must address the question of how to identify the most important papers in a field. These can be defined as publications that present the highest quality of scientific research.

This is particularly relevant, for example, when developing a new project that builds upon the work of others. In such cases, we must ensure that the previous studies influencing our experimental design and project development are of the highest quality. Similarly, when writing a policy brief intended to inform political decisions, it is essential that the recommendations reflect the state of the art and are based on the best available scientific evidence.

Therefore, we need to consider which criteria can be used to evaluate the quality of scientific research and how to decide which of the many available publications on a given topic are worth reading and including in one’s own work.

This is the focus of this chapter. We will examine not only how to analyze the scientific literature itself, but also how to assess the quality of researchers and critically discuss formal methods used to evaluate the impact and quality of scientific output. Finally, we will address common problems in the scientific literature, particularly errors and cases of fraud.

Scientists frequently evaluate the scientific writing of their colleagues, for example, when reviewing grant applications or conducting peer reviews of scientific publications.


Impact and quality of scientific literature

Measuring the impact of science

Science is an expensive endeavor, and it is increasingly evaluated in terms of its impact on society and the economy. Scientists, research institutes, and universities are assessed based on their scientific productivity. For this reason, various measures have been developed to quantify scientific output. These include:

- the amount of money obtained from external, competitive funding sources

- the number and quality of scientific publications

- the number of patents

The impact of scientific publications

Scientific publications are arguably the most important outcome of scientific research because they undergo peer review. Only after successfully passing this review process does a paper appear in a scientific journal. It can then be considered part of the scientific literature and the broader body of human knowledge.

One way to evaluate scientific quality is through bibliometric measures, i.e., the quantification of various aspects of the scientific literature—such as how often a publication has been cited by others. Under this paradigm, the quality and impact of scientific publications are represented by metrics that aim to reflect their influence. A standard bibliometric measure is the impact factor, which is defined as the average number of citations received by articles published in a journal over a given period of time. Impact factors can be calculated not only for scientific journals, but also for research institutes, individual researchers, and even for specific papers.

The impact factor (IF)

In its initial definition, the impact factor (IF) is a measure reflecting the average number of citations of articles published in peer-reviewed scientific journals. It is frequently used as a proxy for the relative importance of a journal within its field, with journals with higher impact factors deemed to be more important than those with lower ones. The impact factor was devised by Eugene Garfield, the founder of the Institute for Scientific Information (ISI), now part of Clarivate Analytics. Impact factors are calculated yearly for those journals that are indexed in Clarivate’s Journal Citation Reports.1

1 These journals are frequently called ‘indexed journals’. The term is also frequently used in the marketing of lower-quality journals, so-called predatory journals.

How is the IF calculated? In a given year, the impact factor of a journal is the average number of citations to those papers that were published during the two preceding years. For example, the 2008 impact factor of a journal would be calculated as follows:

- \(A\) = the number of times articles published in 2006 and 2007 were cited by indexed journals during 2008

- \(B\) = the total number of ‘citable items’ published in 2006 and 2007

- The impact factor for 2008 is then \(= A/B\)

In summary, the impact factor can be expressed by the following equation:

\[\begin{equation} \label{if} \text{IF} = \frac{\text{Number of citations in a given year to articles published in the two preceding years}}{\text{Number of citable items published in the two preceding years}} \end{equation}\]
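The calculation above can be sketched in a few lines of Python. This is only an illustration: the function name `impact_factor` and the citation and article counts are hypothetical and are not taken from the Journal Citation Reports.

```python
def impact_factor(citations: int, citable_items: int) -> float:
    """Return A / B: citations in the reference year to articles from the
    two preceding years, divided by the citable items from those years."""
    if citable_items <= 0:
        raise ValueError("citable_items must be positive")
    return citations / citable_items


# Hypothetical journal (assumed numbers): 1,200 citations in 2008 to the
# 400 citable items it published in 2006 and 2007.
if_2008 = impact_factor(citations=1200, citable_items=400)
print(f"IF 2008 = {if_2008:.2f}")
```

Because the result is a simple ratio, it says nothing about how the citations are spread across the individual articles of the journal.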

The impact factors for journals of a particular field can be downloaded from the Journal Citation Reports, which requires a subscription.

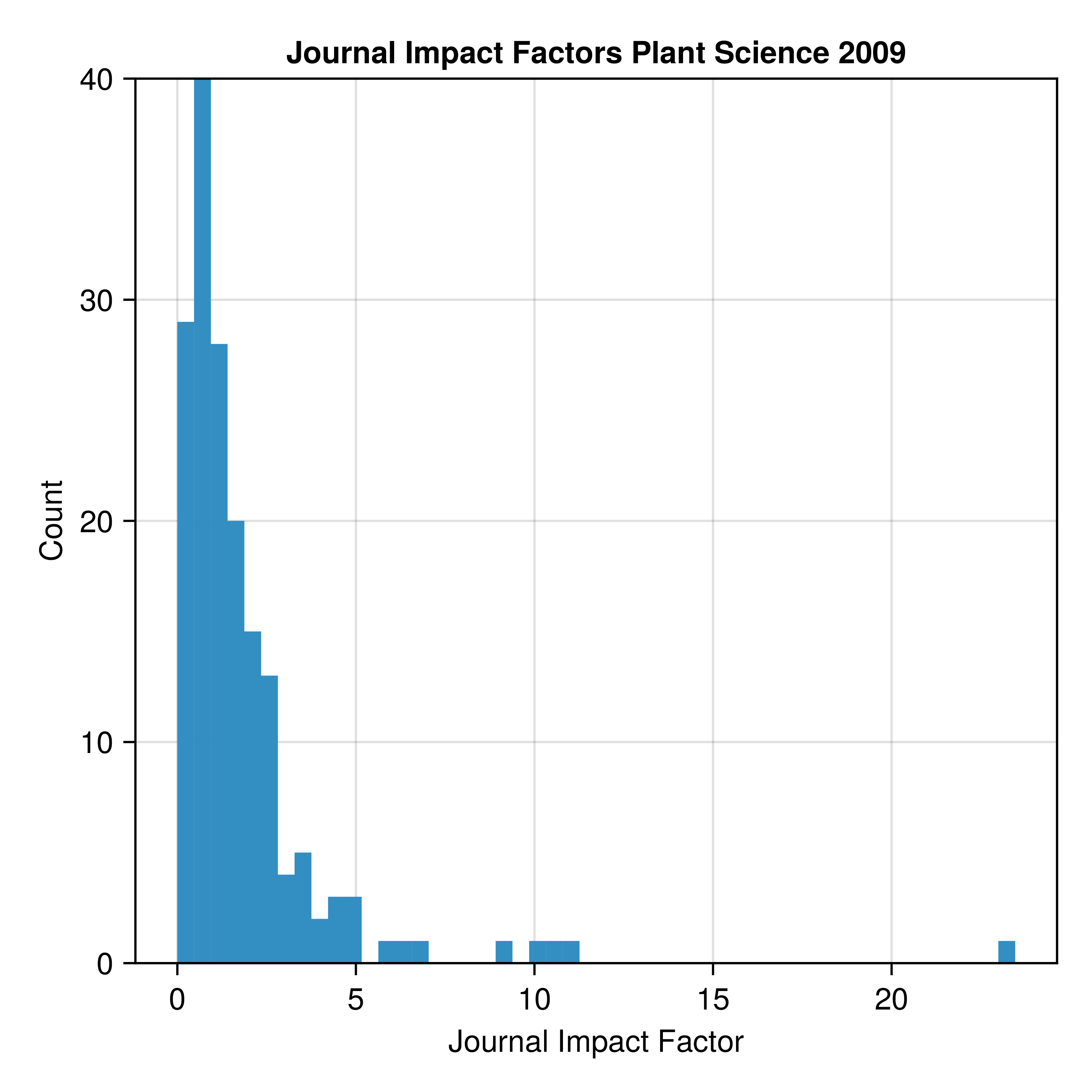

Figure 1 shows the distribution of impact factors of journals in the field of ‘plant science’ in the year 2009.

The distribution is log-normal, which indicates that most journals have a rather low impact factor and only very few journals have a high one. The top-ranked journals in the figure are review journals, among them Annual Review of Plant Biology, Annual Review of Phytopathology, Current Opinion in Plant Biology, and Trends in Plant Science. These journals publish only review papers and no original research. The first journal with mainly original research papers is Plant Cell, with an impact factor of 9.88.

Bibliometric measures for journals

Many other bibliometric measures for journals exist, but we do not consider them further.

However, it is very interesting to analyse the distribution of citation numbers of articles within journals.

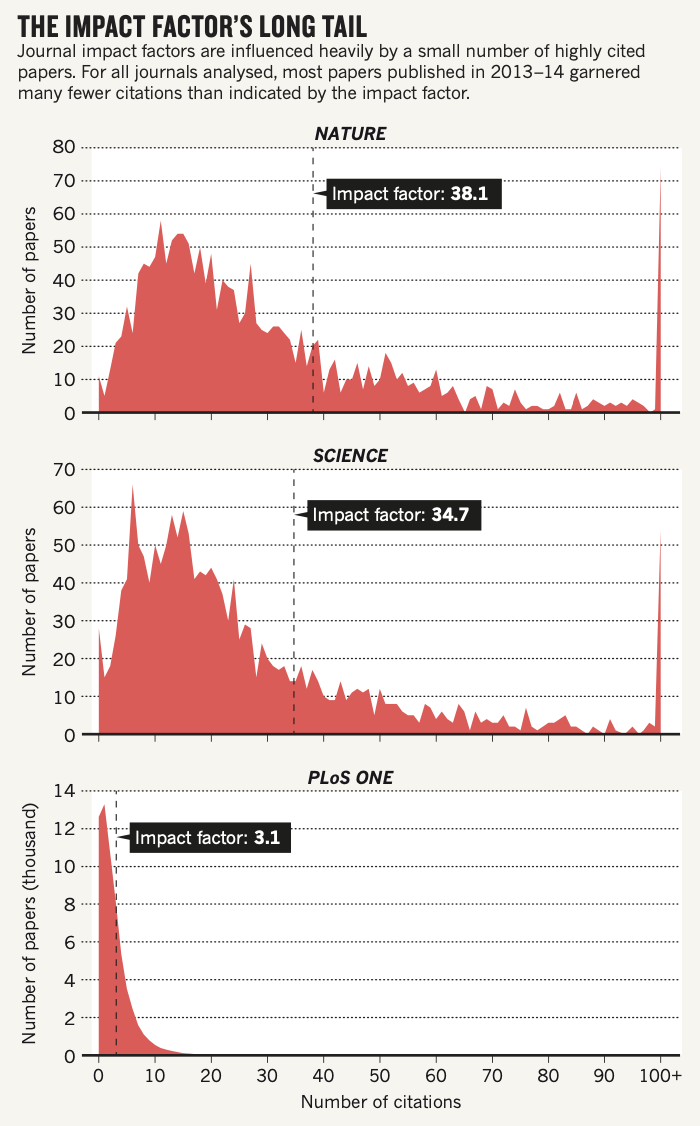

The distribution of numbers of citations in the three journals Nature, Science and PLoS ONE shows a distinct pattern (Figure 6).

For all three journals, the citation counts again follow a log-normal distribution, which is characterized by a large number of articles with a low citation count and a much smaller proportion of articles with a high citation count. Such a skewed distribution is also called a ‘long-tailed’ distribution.

One additional aspect is the fairly large number of papers with a very high number of citations (>100) at the right end of the distribution for Nature and Science; such papers are missing from PLoS ONE. A key mathematical property of the log-normal distribution is that this small proportion of very highly cited articles drives the mean value (i.e., the IF). These articles are mainly responsible for the more than 10-fold difference in the IF between Nature and Science on the one hand and PLoS ONE on the other. As discussed below, this mathematical property is one of the main criticisms of the IF.
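The effect of a long tail on the mean can be illustrated with a small simulation. The following is a minimal sketch with assumed parameters: the values of `mu`, `sigma`, and the seed are arbitrary choices for illustration and are not fitted to Nature, Science, or PLoS ONE, and the helper names are hypothetical.

```python
import random
import statistics


def simulate_citations(n_articles: int, mu: float, sigma: float, seed: int) -> list[int]:
    """Draw hypothetical citation counts from a log-normal distribution."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    return [int(rng.lognormvariate(mu, sigma)) for _ in range(n_articles)]


def top_share(citations: list[int], fraction: float) -> float:
    """Share of all citations received by the most-cited `fraction` of articles."""
    ranked = sorted(citations, reverse=True)
    n_top = max(1, int(len(ranked) * fraction))
    total = sum(ranked)
    return sum(ranked[:n_top]) / total if total else 0.0


# Assumed parameters, chosen only to produce a clearly skewed distribution.
citations = simulate_citations(n_articles=10_000, mu=1.0, sigma=1.5, seed=1)
mean_citations = statistics.mean(citations)      # analogous to the IF
median_citations = statistics.median(citations)  # the "typical" article
share_top_quarter = top_share(citations, 0.25)

print(f"mean = {mean_citations:.1f}, median = {median_citations:.1f}")
print(f"share of citations from the top 25% of articles = {share_top_quarter:.0%}")
```

In such a skewed sample the mean lies well above the median, and a minority of articles accounts for most of the citations. This is why an average such as the IF describes a typical article in the journal poorly.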

Measuring the Impact of individual researchers

It is also possible to measure and compare the impact of individual scientists, which is now common practice in hiring processes and in the evaluation of grant proposals. There are several measures to evaluate the impact of individual scientists:

- Total number of peer-reviewed articles published

- This measure is easily calculated from records of the author in databases

- Average impact factor of journals

- The impact factor of the journals in which a particular person has published articles. This use is widespread but controversial, because citation counts vary widely from article to article within a single journal (Figure 6).

- Total number of citations of a scientist’s papers

- This can also be easily extracted from curated or automated websites

- Hirsch-Index (\(h\) index)

- An \(h\) value of 15 means that an author has published 15 papers that were each cited at least 15 times. It has been shown that this index can be used to assess the scientific productivity of an individual at various career stages, because expectation values can be formulated.

- \(b\) Index

- The \(h\) index divided by the number of years a researcher has spent in science.

Many more statistics have been devised that make it possible to evaluate scientists without actually having to read their papers.

The \(h\) index explained

The \(h\) index is widely used to measure the scientific output of individual researchers. It is named after its inventor, the physicist Jorge E. Hirsch (Hirsch, 2005), who developed this measure to enable the ranking of individual scientists.2

2 See also Wikipedia

It has the following definition:

- A researcher has an \(h\) index of \(n\) if \(n\) of their articles have each been cited at least \(n\) times

- 1 article cited at least once: \(h=1\)

- 4 articles each cited at least 4 times: \(h=4\)
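The definition can be turned into a short procedure: sort the citation counts in descending order and find the last rank at which the count is still at least as large as the rank. The following is a minimal sketch; the function name `h_index` and the example citation counts are hypothetical.

```python
def h_index(citations: list[int]) -> int:
    """Return the largest h such that h articles have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the first `rank` articles all have >= rank citations
        else:
            break
    return h


# Hypothetical author with five articles.
example = [25, 8, 5, 3, 3]
print(h_index(example))  # prints 3
```

In this example, three articles have at least three citations each, but there are not four articles with at least four citations, so \(h=3\). Note that the single highly cited article (25 citations) does not raise the index on its own.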

Calculate the \(h\)-index of a scientist with the following citation counts:

- 1st article, 19 times cited

- 2nd article, 8 times cited

- 3rd article, 4 times cited

- 4th article, 20 times cited

- 5th article, 10 times cited

Criticism of impact factors

The main purpose of impact factors is to allow a formalized, possibly objective, and essentially automated assessment of individual scientists, research institutions, or scientific journals in the vast and extremely diverse scientific enterprise. Hence, they used to be considered an efficient system of reputation building.

However, any such formal system is liable to abuse: participants adapt to the rules of the game and use (or manipulate) them to maximise their own fitness in this system.3 For example, the impact factor of a journal, and not its scientific direction, may determine to which journal a scientific article is submitted. Numerous criticisms have been made of the use of impact factors. The most basic arguments against using impact factors are:

3 However, like any other metric used for evaluation, Goodhart’s law applies to the impact factor: “When a measure becomes a target, it ceases to be a good measure”. See Wikipedia.

- Quantity of publications does not imply quality

- The real value of research often becomes obvious only much later

- Differences in the impact of publications: A single researcher may produce only a single, but outstanding paper in his or her lifetime and not much else, whereas a mediocre researcher may publish many papers with mediocre content.

- Counting impact factors puts a big administrative burden on scientists (although this can be done automatically, e.g., with Google Scholar)

- All measures raise the issue of authorship on scientific papers. It is highly advantageous to be a co-author on many papers, even though one’s contribution to a paper may be minimal. This is because indices such as the IF do not take into account the order of authorship or the relative contribution of each author.

Besides the more general debate on the usefulness of citation metrics, criticisms mainly concern the validity of the impact factor, possible manipulation, and its misuse.

Low correlation between impact factor and research quality

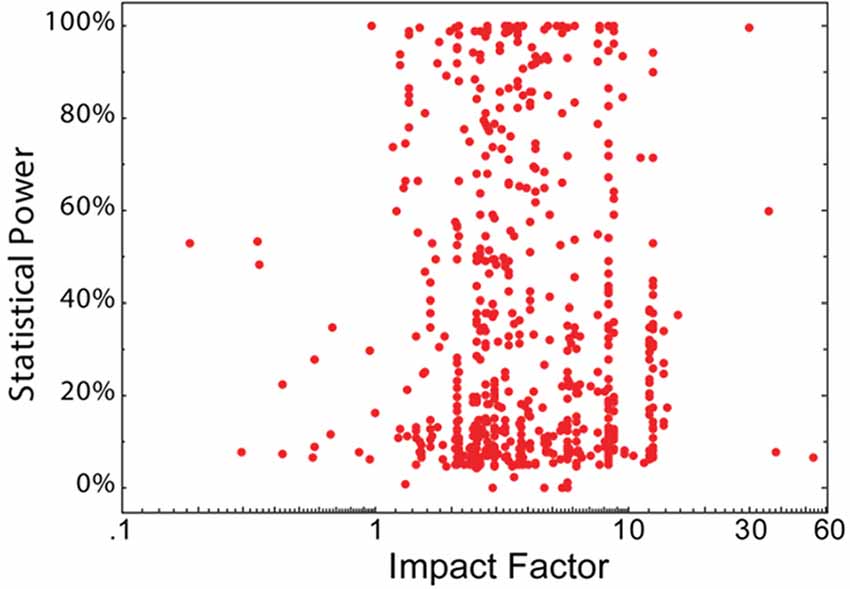

One of the main criticisms of metrics such as the IF is the low correlation between the value of an indicator and research quality.

Quantitative analyses of the statistical power of studies (which is a measure of the quality of the underlying experimental design) and the impact factor (IF) of the journal in which the research was published revealed a low or no correlation between both measures. This is shown in Figure 2 for the field of neuroscience. Therefore, if the statistical power of a study is taken as a measure of the quality (and reproducibility) of a study, the impact factor is clearly not correlated with its quality.

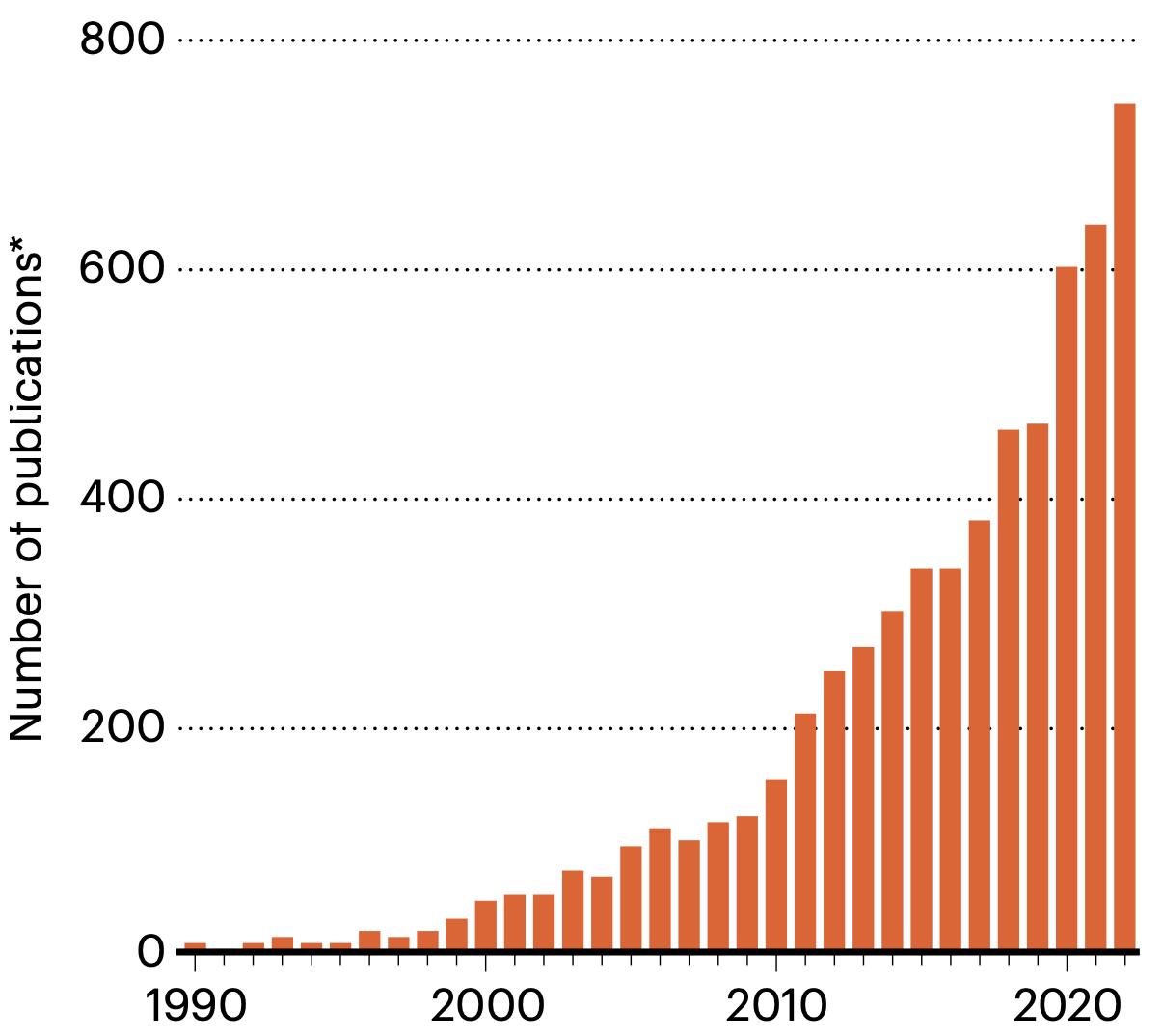

For the field of crop science, a similar problem has been outlined in a recent commentary (Khaipho-Burch et al., 2023). It criticises the fact that many studies report substantial increases in crop yield, from 10% up to 68%, through genetic manipulation (e.g., using genome editing). The number of studies claiming substantial yield increases by gene manipulation is rapidly increasing (Figure 3).

Such a stepwise increase in yield is much higher than can be accomplished by conventional plant breeding based on cross-breeding of elite lines. Frequently, such studies are published in journals with a high impact factor and accompanied by sensationalist press releases (“A gene that may feed the world”, etc.), e.g. Wei et al. (2022) or De Souza et al. (2022).

However, in many cases the yield differences were obtained under laboratory or greenhouse conditions, but were not validated in realistic field trials. For this reason, the authors of the commentary provide five criteria that should be met to justify such claims. Among others, these include realistic definitions of yield, a validation of the yield gain in statistically powerful multi-year and multi-location experiments, and cultivation conditions that reflect those on farmers’ fields.

In summary, the current publishing and academic reward system favors publication in high-impact journals, which frequently results in bad science or exaggerated claims. For this reason, many scientists, scientific societies, and research funding organisations are considering alternatives.

A possible alternative to impact factors?

There are many criticisms about using simple metrics to measure the quality of scientists and scientific work. Good historical examples where such metrics would have failed are described in this blog post.

On the other hand, the number of scientific publications is growing very rapidly, and no scientist can follow the complete body of scientific literature within a field of specialty, and even less so outside of it. Therefore, some measure of importance and impact is necessary to identify the key papers in a scientific field. The impact factor of a paper and of the journal in which it is published is a first step towards this.

To counter the publication of too many scientific papers with too little impact each, scientific funding agencies such as the Deutsche Forschungsgemeinschaft now ask applicants to list only their ten most important publications in a grant proposal, and not all of their publications.4 The expectation is that scientists will now write longer papers with more complete datasets and analyses, which may subsequently result in a higher impact. In other words, the quality and not the quantity of research is going to become important.

4 See this link

The Declaration on Research Assessment (DORA) is an initiative aimed at improving the ways research outputs are evaluated. It emphasizes moving away from traditional metrics like journal impact factors and encourages assessing research based on its quality, impact, and contributions to the field, regardless of the venue of publication. DORA advocates for more transparent, inclusive, and comprehensive research evaluation practices across disciplines. More information can be found at this link. Many institutions subscribe to this initiative, but it is unclear to what degree this commitment has a substantial impact on the hiring committees at academic institutions.

Retractions of scientific publications

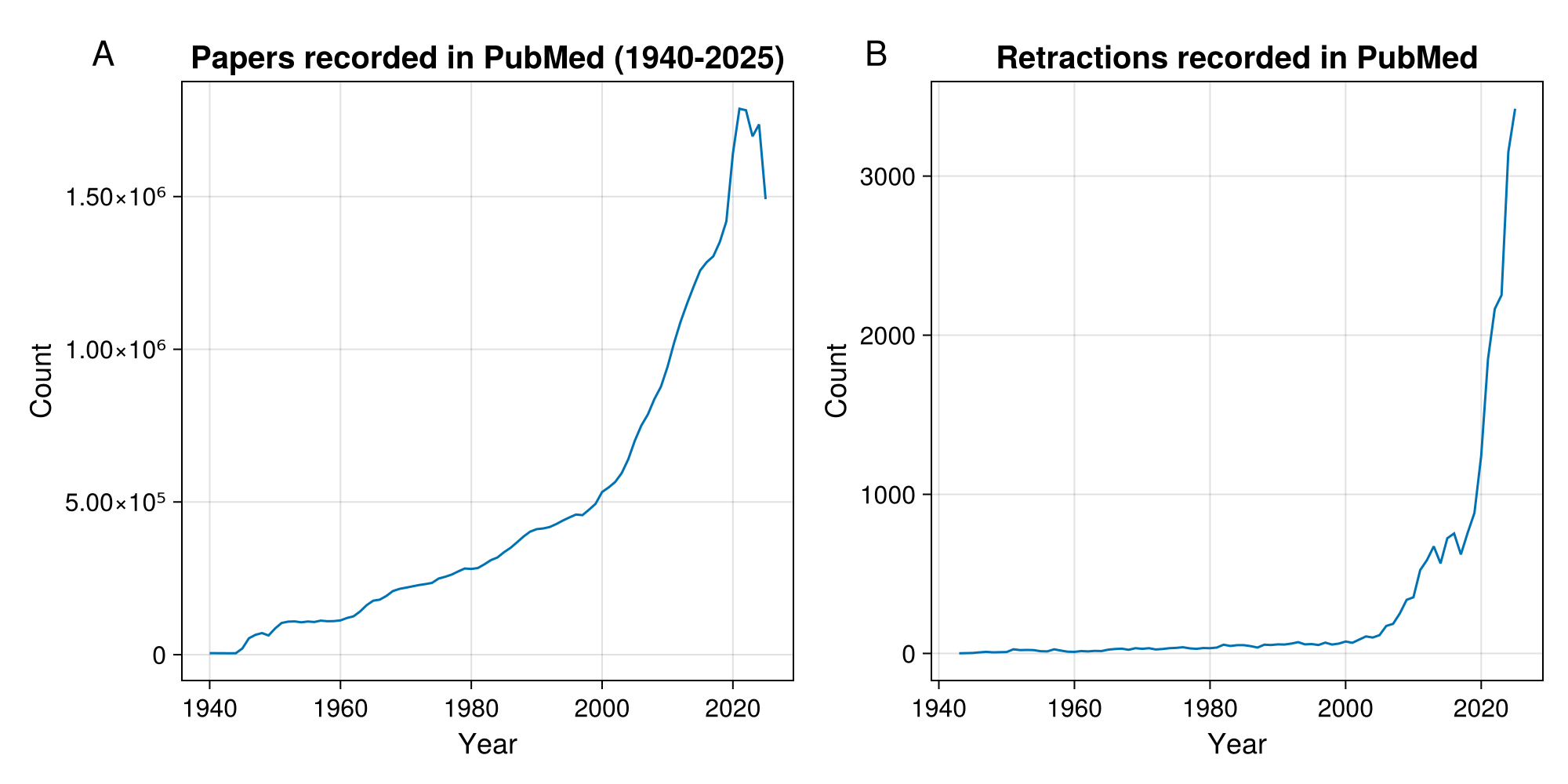

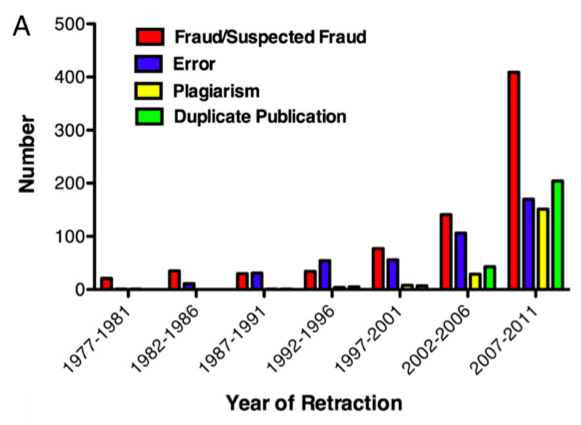

The growing number of papers published per year is accompanied by a similarly fast growth in the number of retracted papers (Figure 4). This is due to several reasons:

- A growing awareness of falsification.

- New techniques to recognize the manipulation of images.

- Stronger competition between research groups, which increases the chance that critical and important experiments are repeated.

A study that investigated the cause of paper retractions (Fang et al., 2012) showed that a majority of retractions result from fraud or suspected fraud.

There is also a watch blog that follows up on retractions due to scientific fraud or other problems.5 There seems to be a much higher proportion of retractions in the field of biomedicine than in agricultural research.

It is important to note that wrong, falsified, or controversial papers can also accumulate high impact factors. Hence, a high impact factor is not necessarily a sign of high scientific quality.

Finding scientific literature

After investigating how to evaluate the quality of scientific literature, it is also important to discuss how to find relevant literature, particularly high-quality scientific literature. Whether you are conducting research to understand the state of the art in your field or writing your thesis or publication, finding the most up-to-date and relevant literature is crucial.

The field of literature search is evolving rapidly. In the past, one approach was to regularly scan the most important journals in the field, often by subscribing to email notifications of new issues, which allowed for a consistent review of interesting and relevant papers. Another approach was to search curated literature databases such as Web of Science or Scopus, both of which require a subscription and are owned by major publishing companies. The University of Hohenheim has subscribed to Scopus (Link), and therefore you can use it for your literature search.

However, the field is changing quickly due to the increasing availability of public information and the growing influence of artificial intelligence. Numerous companies now offer AI-based tools to search for relevant literature. These tools provide criteria to assess the relevance of matches, such as the number of citations a publication has received, or citation networks that help identify key publications in a field.

In the following, a few methods are presented that you can explore for yourself.

Google Scholar

The first approach is almost considered a classical method today: using a search engine. Google Scholar (Link) is arguably the largest and most effective search engine for finding relevant papers. Most importantly, it is free to use, and instead of relying on manual curation, it leverages Google’s search algorithms to identify relevant publications.

A key feature of Google Scholar is the ability to filter searches by specific years, and once you’ve found a publication, you can assess its quality by reviewing its citation count—how often it has been cited by others.

Additionally, many scientists maintain a Google Scholar profile where they list their publications. Google automatically calculates an \(h\)-index6, which serves as a useful indicator of a researcher’s scientific impact and the quality of their work—in other words, how trustworthy their research is.

6 See, for example https://scholar.google.de/citations?user=TN3bbNwAAAAJ&hl=de

ResearchRabbit

ResearchRabbit (researchrabbit.ai) searches public databases, similar to Google Scholar, and analyses citation networks. Its graphical presentation of these networks makes it possible to identify publications that are well connected to other publications and are therefore of potential importance. It can be linked easily with Zotero and is well suited to building collections of literature on a given topic.

OpenKnowledgeMaps

Find the top 100 papers relevant to your topic using citation networks: https://openknowledgemaps.org.

Here, it is important to have a good and precise query for terms used in the search.

Perplexity

Perplexity (perplexity.ai) is a search engine that combines classical web search with artificial intelligence. It is becoming very popular among researchers. Perplexity provides a browser interface, but also an app that can be installed locally (Comet).

ChatGPT Research

For subscribers to ChatGPT Plus or Pro, ChatGPT Research is available. It searches the web on a given topic and provides references. However, it is hard to validate whether it has found the relevant and complete set of articles on a topic, and which criteria it has used for the literature search. Therefore, use this function with caution.

Further AI-based methods for literature search

The field of AI-based methods for searching scientific literature is rapidly evolving. For this reason, we provide only a few links:

Inciteful can be linked with Zotero and makes it possible to search for the relevant literature on a given topic. See this blog post for a description and links to other potentially useful AI-based tools for literature search.

Semantic Scholar is another tool for literature searches.

In Bachelor’s and Master’s theses, it is often noticeable that students do not cite the appropriate literature.

For example, instead of citing peer-reviewed research publications or reviews, students cite websites, newspaper articles, or articles from non-peer-reviewed sources. Also, quite frequently, articles from unknown or predatory research journals are cited.

This strongly suggests that the students have not engaged seriously with the subject matter and have mostly compiled their references from regular Google searches—not from Google Scholar searches.

This may lead to a negative assessment of a thesis.

Therefore, an important tip for your Master’s thesis: Take the time to search for studies that are either published in the most visible journals or are the most highly cited in the field. Review articles are also acceptable, and you should cite these.

Ideally, you should fully read these articles to grasp their content.

Another important tip is not to simply copy figures from the internet or from articles, such as in the introduction of a Master’s thesis. Instead, consider how you can, with minimal effort, create your own diagram or infographic that demonstrates that you have understood, reflected on, and worked through the topic.

Summary

- Bibliometric measures are an automated and standardized way of evaluating research.

- The most widely used measure is the impact factor and its variants.

- The impact factor of a journal is defined as the average number of citations received in a given year by the articles it published in the two preceding years.

- Bibliometric assessment of scientific impact has many problems and is frequently criticized.

- The use of impact factors is based solely on formal criteria; the content, and therefore the creativity and actual impact of the research, is not assessed.

- Wrong or controversial papers can also get high impact factors.

- Alternative measures to evaluate the quality of research publications are being developed that attempt to focus on the content and less on formal criteria for the evaluation.

- Multiple engines and approaches for searching literature are being developed: curated databases, classical web searches, and more recently searches based on artificial intelligence using large language models. It is important to understand the limitations of each approach.

Key concepts

Further reading

Study questions

- Understanding Evaluation Metrics: What is the purpose of bibliometric measures like the impact factor and \(h\)-index, and what are the main criticisms against their use in evaluating scientific quality?

- Critical Analysis: Why does a high impact factor not necessarily indicate high research quality, and what statistical properties of citation distributions contribute to this issue?

- Ethics and Reliability: What are the most common reasons for paper retractions, and what do these reveal about challenges in maintaining scientific integrity?

- Quality over Quantity: How does the Declaration on Research Assessment (DORA) propose to reform current research evaluation systems, and what are its main principles?

- Practical Application: When conducting a literature search for your thesis, how can you distinguish between high-quality sources (e.g., peer-reviewed journals) and low-quality or predatory publications?

- Digital Literacy: Compare traditional literature databases (e.g., Scopus, Web of Science) with AI-based search tools (e.g., Google Scholar, ResearchRabbit, Perplexity). What are the strengths and limitations of each approach?

In-class activities

World Café: How do we find and judge good science?

The following 20-minute World Café invites students to share their experiences and challenges in searching for, selecting, and evaluating scientific literature. It aims to stimulate critical reflection on how publication quality is judged and what systemic issues influence scientific publishing today.

- Duration: 20 minutes

- Group size: 4–5 students per table

Objective:

To reflect on how students searched for and selected literature in their Bachelor theses, discuss criteria for scientific quality and impact, and critically examine the current publication system.

1. Setup (2 minutes)

- Arrange the classroom into 3–4 small discussion tables, each with paper or a shared digital note pad.

- Assign one table host per group who stays during rotations and summarizes previous discussions.

- Each table discusses one guiding question (below).

2. Round 1 – Personal Experience (6 minutes)

Question 1:

> By which criteria and with which tools did you select the references that you cited in your Bachelor thesis?

Prompts:

- How did you find your sources?

- Which criteria did you consider when selecting references?

- What challenges did you face in finding suitable references?

Goal:

Share and compare individual search habits and tools for literature discovery.

3. Round 2 – Evaluating Quality (6 minutes)

Question 2:

> Which criteria would you apply to decide whether a publication is of high quality, relevant for your field, or has a high impact?

Prompts:

- What signals indicate trustworthiness in your opinion?

- How do you decide if the findings of a study are robust?

Goal:

Develop a shared list of criteria students actually use or would like to use to judge publication quality.

4. Round 3 – Critical Reflection (6 minutes)

Question 3:

> Which problems do you recognize in the current scientific publication system with respect to the quality of publications?

Prompts:

- Which aspects of the scientific publication system do you consider problematic?

- How does competition for impact and funding shape the literature?

- What reforms could improve the system?

Goal:

Identify systemic problems and discuss possible improvements.

5. Wrap-Up and Synthesis (3–4 minutes)

- Each table host summarizes the main insights from their table in one minute.

- The instructor collects recurring themes.

- Short closing reflection:

> “What will you do differently next time you search for literature, e.g., for your Master’s thesis?”

Exercise: Evaluating the Potential of Large-Scale Organic Agriculture

Scenario: You are an intern for a member of parliament who is considering a proposal to transition the agricultural system to fully organic, based on polling data indicating public support and the recognized benefits for long-term sustainability.

Your task is to assess the scientific evidence to determine whether large-scale organic agriculture can realistically achieve these goals. You are required to write a short, 2-page policy paper, referencing no more than five scientific publications.

Please consider the following questions:

- Criteria for Selection: What formal and informal criteria will you use to select the five papers to include in your policy paper?

- Relevance of Studies: What will be your key criteria for determining the relevance of a scientific study for this policy paper?

- Summarization and Referencing: How will you summarize and reference the selected studies to convey the necessary information effectively?

- Addressing Unreferenced Studies: How will you account for the remaining studies that you cannot reference in the policy paper?

Discussion: Problems with the impact factor

The impact factor of a journal in which a scientist publishes is often misused to evaluate the importance of an individual publication or evaluate an individual researcher. This does not work well since a small number of publications are cited much more than the majority - for example, about 90% of Nature’s 2004 impact factor was based on only a quarter of its publications, and thus the importance of any one publication will be different from, and in most cases less than, the overall number (Figure 6).

The impact factor, however, averages over all articles and thus underestimates the citations of the most cited articles while exaggerating the number of citations of the majority of articles.

Discuss the following questions:

- What is a characteristic of the distribution of citation numbers that all three journals have in common?

- Given the shape of the distribution, why does the average number of citations over two years (i.e., the impact factor) carry only limited information about a random article published in the journal?

- Which reasons can you think of that may explain the differences in the impact factors of the three journals in Figure 6?

- Can you summarize the key problems of the impact factor as a measure of scientific quality?